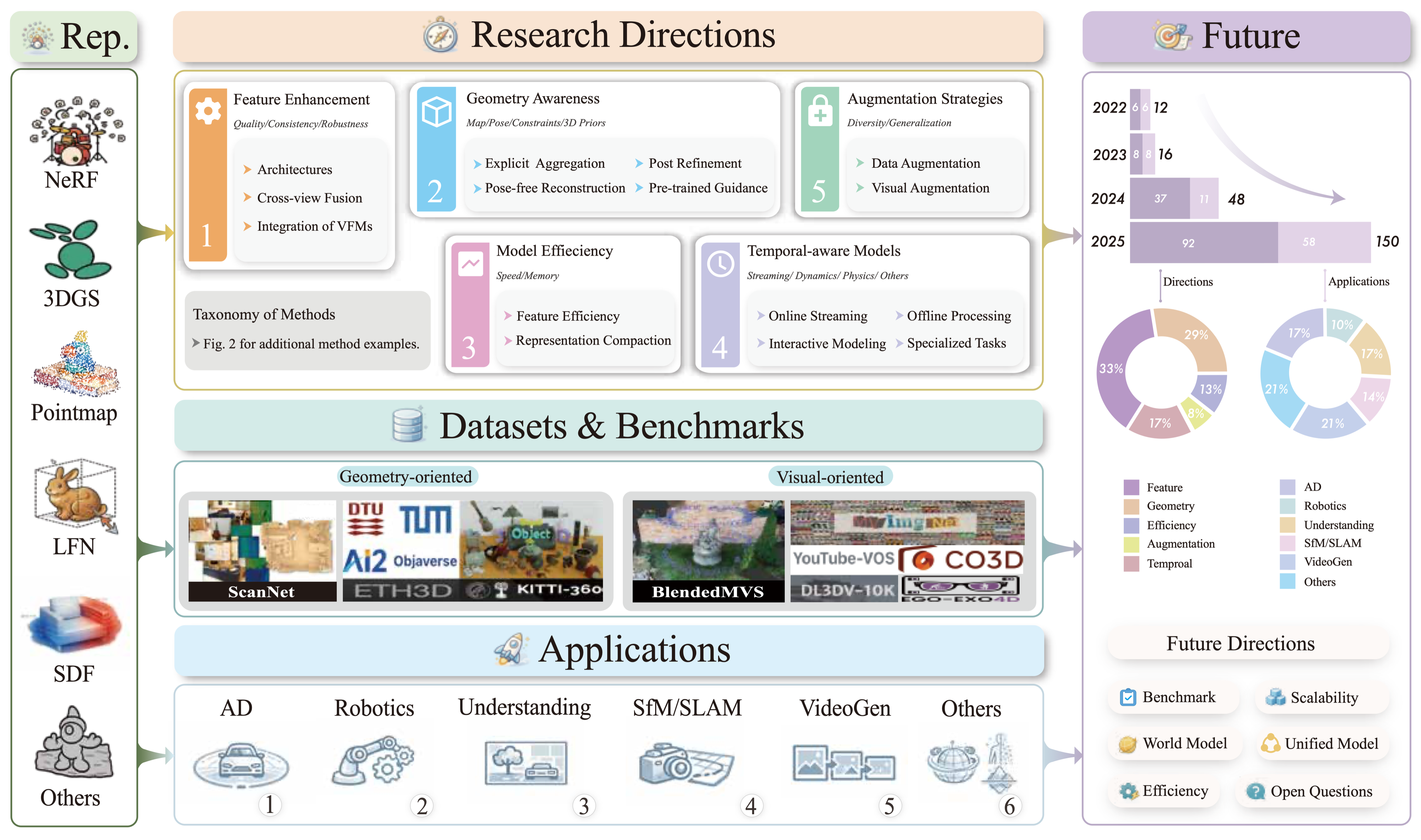

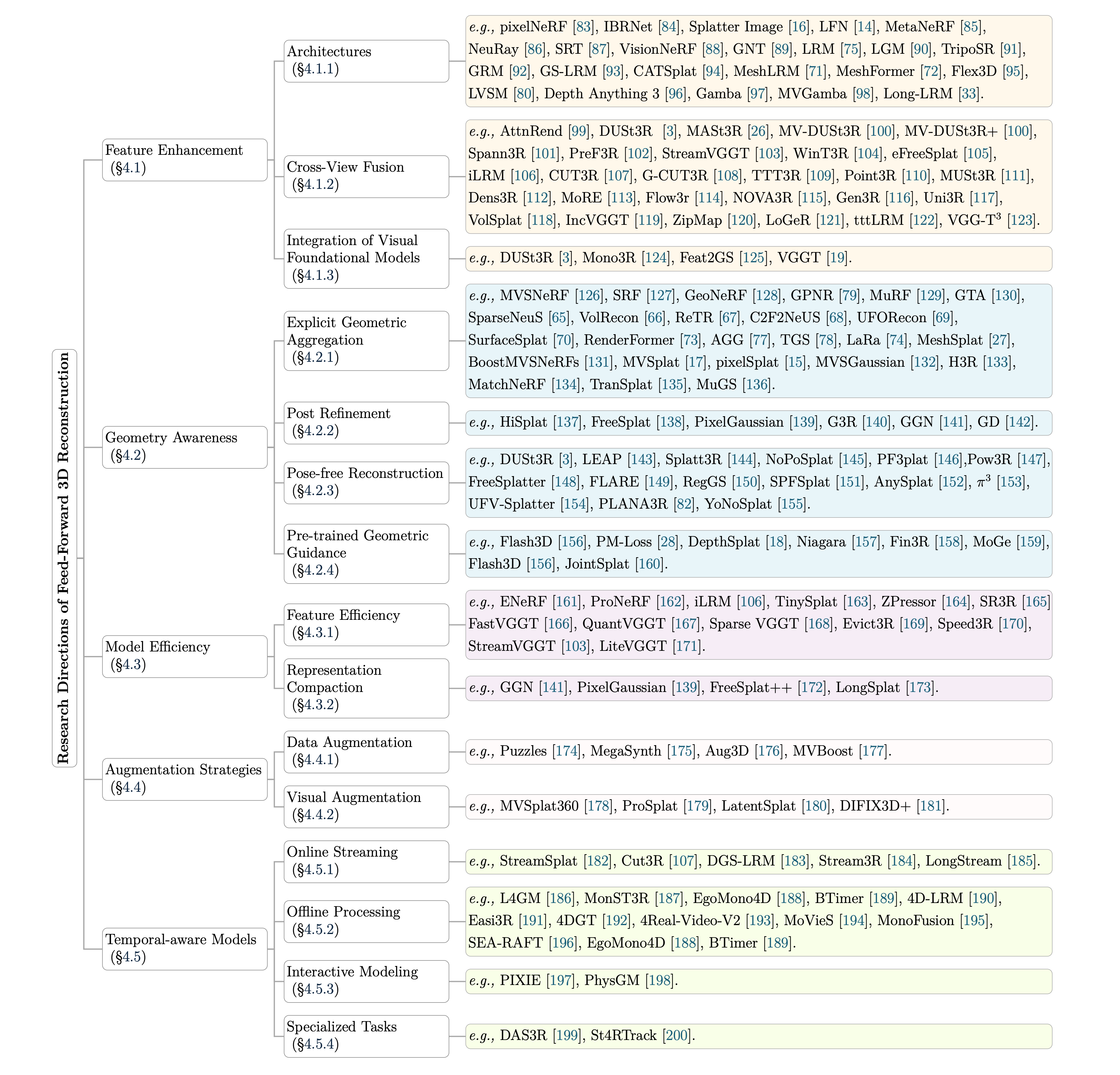

A curated list for feed-forward 3D scene modeling, including research directions, datasets, and applications.

Advanced Encoding Architectures

pixelNeRF: Neural Radiance Fields from One or Few Images. [📄 Paper | 💻 Code ]

IBRNet: Learning Multi-View Image-Based Rendering. [📄 Paper | 💻 Code |🌐 Project Page ]

Splatter Image: Ultra-Fast Single-View 3D Reconstruction. [📄 Paper | 💻 Code |🌐 Project Page ]

Convolutional Occupancy Networks. [📄 Paper | 💻 Code |🌐 Project Page ]

Light Field Networks: Neural Scene Representations with Single-Evaluation Rendering. [📄 Paper | 💻 Code |🌐 Project Page ]

Learned Initializations for Optimizing Coordinate-Based Neural Representations. [📄 Paper | 💻 Code |🌐 Project Page ]

Neural Rays for Occlusion-aware Image-based Rendering. [📄 Paper | 💻 Code |🌐 Project Page ]

$C^3$-GS: Learning Context-aware, Cross-dimension, Cross-scale Feature for Generalizable Gaussian Splatting. [📄 Paper | 💻 Code ]

Scene Representation Transformer: Geometry-Free Novel View Synthesis Through Set-Latent Scene Representations. [📄 Paper | 💻 Code |🌐 Project Page ]

VisionNeRF: Vision Transformer for NeRF-Based View Synthesis from a Single Input Image. [📄 Paper | 💻 Code |🌐 Project Page ]

RePAST: Relative Pose Attention Scene Representation Transformer. [📄 Paper ]

Is Attention All That NeRF Needs? [📄 Paper | 💻 Code |🌐 Project Page ]

Large Reconstruction Model (LRM).

Instant3D: Fast Text-to-3D with Sparse-view Generation and Large Reconstruction Model.

TripoSR: Fast 3D Object Reconstruction from a Single Image. [📄 Paper ]

GRM: Large Gaussian Reconstruction Model for Efficient 3D Reconstruction and Generation.

GS-LRM: Large Reconstruction Model for 3D Gaussian Splatting. [📄 Paper | 💻 Code |🌐 Project Page ]

MeshLRM: Large Reconstruction Model for High-Quality Meshes.

MeshFormer: High-Quality Mesh Generation with 3D-Guided Reconstruction Model.

Flex3D: Feed-Forward 3D Generation With Flexible Reconstruction Model And Input View Curation.

LVSM: A Fully Data-Driven Approach to Novel View Synthesis.

Depth Anything 3: Recovering the Visual Space from Any Views.

Gamba: Marry Gaussian Splatting With Mamba for Single-View 3D Reconstruction.

MVGamba: Unify 3D Content Generation as State Space Sequence Modeling.

Long-LRM: Long-sequence Large Reconstruction Model for Wide-coverage Gaussian Splats. [📄 Paper | 💻 Code |🌐 Project Page ]

AttnRend: Learning to Render Novel Views from Wide-Baseline Stereo Pairs. [📄 Paper | 💻 Code |🌐 Project Page ]

Epipolar-Free 3D Gaussian Splatting for Generalizable Novel View Synthesis. [📄 Paper | 💻 Code |🌐 Project Page ]

LGM: Large Multi-View Gaussian Model for High-Resolution 3D Content Creation.

DUSt3R: Geometric 3D Vision Made Easy. [📄 Paper | 💻 Code |🌐 Project Page ]

Grounding Image Matching in 3D with MASt3R. [📄 Paper | 💻 Code |🌐 Project Page ]

MV-DUSt3R: Multi-View Dense Stereo 3D Reconstruction.

MV-DUSt3R+: Single-Stage Scene Reconstruction from Sparse Views In 2 Seconds. [📄 Paper | 💻 Code |🌐 Project Page ]

PreF3R: Pose-Free Feed-Forward 3D Gaussian Splatting from Variable-length Image Sequence. [📄 Paper | 💻 Code |🌐 Project Page ]

3D Reconstruction with Spatial Memory. [📄 Paper | 💻 Code |🌐 Project Page ]

Fast3R: Towards 3D Reconstruction of 1000+ Images in One Forward Pass. [📄 Paper | 💻 Code |🌐 Project Page ]

MUSt3R: Multi-view Network for Stereo 3D Reconstruction. [📄 Paper | 💻 Code |🌐 Project Page ]

WinT3R: Window-Based Streaming Reconstruction with Camera Token Pool. [📄 Paper ]

Continuous 3D Perception Model with Persistent State. [📄 Paper | 💻 Code |🌐 Project Page ]

VolSplat: Rethinking Feed-Forward 3D Gaussian Splatting with Voxel-Aligned Prediction. [📄 Paper ]

G-CUT3R: Guided 3D Reconstruction with Camera and Depth Prior Integration. [📄 Paper ]

TTT3R: 3D Reconstruction as Test-Time Training. [📄 Paper ]

Point3R: Streaming 3D Reconstruction with Explicit Spatial Pointer Memory. [📄 Paper ]

VGGT: Visual Geometry Grounded Transformer. [📄 Paper | 💻 Code |🌐 Project Page ]

iLRM: An Iterative Large 3D Reconstruction Model. [📄 Paper | 💻 Code |🌐 Project Page ]

Dens3R: A Foundation Model for 3D Geometry Prediction. [📄 Paper ]

MoRE: 3D Visual Geometry Reconstruction Meets Mixture-of-Experts. [📄 Paper ]

Uni3R: Unified 3D Reconstruction and Semantic Understanding via Generalizable Gaussian Splatting from Unposed Multi-view Images. [📄 Paper ]

Flow3r: Factored Flow Prediction for Scalable Visual Geometry Learning. [📄 Paper ]

Gen3R: 3D Scene Generation Meets Feed-Forward Reconstruction. [📄 Paper ]

NOVA3R: Non-pixel-aligned Visual Transformer for Amodal 3D Reconstruction. [📄 Paper | 💻 Code |🌐 Project Page ]

IncVGGT: Incremental VGGT for Memory-Bounded Long-Range 3D Reconstruction.

ZipMap: Linear-Time Stateful 3D Reconstruction with Test-Time Training. [📄 Paper ]

LoGeR: Long-Context Geometric Reconstruction with Hybrid Memory. [📄 Paper ]

tttLRM: Test-Time Training for Long Context and Autoregressive 3D Reconstruction. [📄 Paper ]

VGG-T3: Offline Feed-Forward 3D Reconstruction at Scale. [📄 Paper ]

Integration of Visual Foundation Models

DUSt3R: Geometric 3D Vision Made Easy. [📄 Paper | 💻 Code |🌐 Project Page ]

Mono3R: Exploiting Monocular Cues for Geometric 3D Reconstruction. [📄 Paper ]

Feat2GS: Probing Visual Foundation Models with Gaussian Splatting. [📄 Paper | 💻 Code |🌐 Project Page ]

CATSplat: Context-Aware Transformer with Spatial Guidance for Generalizable 3D Gaussian Splatting from A Single-View Image. [📄 Paper ]

Geometry-aware Improvement

Explicit Geometric Aggregation

MVSNeRF: Fast Generalizable Radiance Field Reconstruction from Multi-View Stereo. [📄 Paper | 💻 Code |🌐 Project Page ]

Stereo Radiance Fields (SRF): Learning View Synthesis for Sparse Views of Novel Scenes. [📄 Paper | 💻 Code |🌐 Project Page ]

GeoNeRF: Generalizing NeRF with Geometry Priors. [📄 Paper | 💻 Code |🌐 Project Page ]

BoostMVSNeRFs: Boosting MVS-based NeRFs to Generalizable View Synthesis in Large-Scale Scenes.

Generalizable Patch-Based Neural Rendering. [📄 Paper | 💻 Code |🌐 Project Page ]

MatchNeRF: Explicit Correspondence Matching for Generalizable Neural Radiance Fields. [📄 Paper | 💻 Code |🌐 Project Page ]

GTA: A Geometry-Aware Attention Mechanism for Multi-View Transformers. [📄 Paper | 💻 Code |🌐 Project Page ]

MuRF: Multi-Baseline Radiance Fields. [📄 Paper | 💻 Code |🌐 Project Page ]

SparseNeuS: Fast Generalizable Neural Surface Reconstruction from Sparse Views.

VolRecon: Volume Rendering of Signed Ray Distance Functions for Generalizable Multi-View Reconstruction.

ReTR: Modeling Depth Distribution for Generalizable Surface Reconstruction.

UFORecon: Generalizable Sparse-View Surface Reconstruction from Arbitrary and Unfavorable Sets.

SurfaceSplat: Connecting Surface Reconstruction and Gaussian Splatting. [📄 Paper ]

RenderFormer: Transformer-based Neural Rendering of Triangle Meshes with Global Illumination. [📄 Paper | 💻 Code |🌐 Project Page ]

AGG: Amortized Generative 3D Gaussians for Single Image to 3D. [📄 Paper ]

TGS: Triplane Meets Gaussian Splatting: Fast and Generalizable Single-View 3D Reconstruction with Transformers.

LaRa: Efficient Large-Baseline Radiance Fields. [📄 Paper | 💻 Code |🌐 Project Page ]

MeshSplat: Generalizable Sparse-View Surface Reconstruction via Gaussian Splatting. [📄 Paper ]

TranSplat: Geometry-Aware Feed-Forward Gaussian Splatting with Transformation Consistency.

H3R: Hybrid Multi-view Correspondence for Generalizable 3D Reconstruction. [📄 Paper ]

MuGS: Multi-Baseline Generalizable Gaussian Splatting Reconstruction.

pixelSplat: 3D Gaussian Splats from Image Pairs for Scalable Generalizable 3D Reconstruction. [📄 Paper | 💻 Code |🌐 Project Page ]

MVSplat: Efficient 3D Gaussian Splatting from Sparse Multi-View Images. [📄 Paper | 💻 Code |🌐 Project Page ]

MVSGaussian: Fast Generalizable Gaussian Splatting Reconstruction from Multi-View Stereo. [📄 Paper | 💻 Code |🌐 Project Page ]

Refining Predicted 3D Scenes

FreeSplat: Generalizable 3D Gaussian Splatting Towards Free-View Synthesis of Indoor Scenes. [📄 Paper | 💻 Code |🌐 Project Page ]

HiSplat: Hierarchical 3D Gaussian Splatting for Generalizable Sparse-View Reconstruction. [📄 Paper | 💻 Code |🌐 Project Page ]

PixelGaussian: Generalizable 3D Gaussian Reconstruction from Arbitrary Views. [📄 Paper | 💻 Code |🌐 Project Page ]

Gaussian Graph Network: Learning Efficient and Generalizable Gaussian Representations from Multi-view Images. [📄 Paper | 💻 Code |🌐 Project Page ]

Generative Densification: Learning to Densify Gaussians for High-Fidelity Generalizable 3D Reconstruction.

G3R: Gradient Guided Generalizable Reconstruction.

LEAP: Liberate Sparse-view 3D Modeling from Camera Poses. [📄 Paper | 💻 Code |🌐 Project Page ]

Splatt3R: Zero-shot Gaussian Splatting from Uncalibrated Image Pairs. [📄 Paper | 💻 Code |🌐 Project Page ]

No Pose, No Problem: Surprisingly Simple 3D Gaussian Splats from Sparse Unposed Images. [📄 Paper | 💻 Code |🌐 Project Page ]

PF3plat: Pose-Free Feed-Forward 3D Gaussian Splatting. [📄 Paper | 💻 Code |🌐 Project Page ]

FreeSplatter: Pose-free Gaussian Splatting for Sparse-view 3D Reconstruction. [📄 Paper | 💻 Code ]

FLARE: Feed-forward Geometry, Appearance and Camera Estimation from Uncalibrated Sparse Views. [📄 Paper | 💻 Code |🌐 Project Page ]

Pow3R: Empowering Unconstrained 3D Reconstruction with Camera and Scene Priors. [📄 Paper | 💻 Code |🌐 Project Page ]

RegGS: Unposed Sparse Views Gaussian Splatting with 3DGS Registration. [📄 Paper | 💻 Code |🌐 Project Page ]

UFV-Splatter: Pose-Free Feed-Forward 3D Gaussian Splatting Adapted to Unfavorable Views. [📄 Paper | 💻 Code |🌐 Project Page ]

$\pi^3$: Scalable Permutation-Equivariant Visual Geometry Learning. [📄 Paper | 💻 Code |🌐 Project Page ]

AnySplat: Feed-forward 3D Gaussian Splatting from Unconstrained Views. [📄 Paper ]

No Pose at All: Self-Supervised Pose-Free 3D Gaussian Splatting from Sparse Views.

SPFSplatV2: Efficient Self-Supervised Pose-Free 3D Gaussian Splatting from Sparse Views. [📄 Paper ]

PLANA3R: Zero-shot Metric Planar 3D Reconstruction via Feed-forward Planar Splatting.

YoNoSplat: You Only Need One Model for Feedforward 3D Gaussian Splatting. [📄 Paper ]

Pre-trained Geometric Guidance

DepthSplat: Connecting Gaussian Splatting and Depth. [📄 Paper | 💻 Code |🌐 Project Page ]

Flash3D: Feed-Forward Generalisable 3D Scene Reconstruction from a Single Image. [📄 Paper | 💻 Code |🌐 Project Page ]

Niagara: Normal-Integrated Geometric Affine Field for Scene Reconstruction from a Single View. [📄 Paper | 💻 Code |🌐 Project Page ]

Revisiting Depth Representations for Feed-Forward 3D Gaussian Splatting. [📄 Paper | 💻 Code |🌐 Project Page ]

Fin3R: Fine-tuning Feed-forward 3D Reconstruction Models via Monocular Knowledge Distillation. [📄 Paper ]

JointSplat: Joint Depth and Flow Priors for Feed-Forward 3D Gaussian Splatting. [📄 Paper ]

Efficient Neural Radiance Fields for Interactive Free-viewpoint Video. [📄 Paper | 💻 Code |🌐 Project Page ]

ProNeRF: Learning Efficient Projection-Aware Ray Sampling for Fine-Grained Implicit Neural Radiance Fields. [📄 Paper | 💻 Code |🌐 Project Page ]

TinySplat: Feedforward Approach for Generating Compact 3D Scene Representation. [📄 Paper ]

ZPressor: Bottleneck-Aware Compression for Scalable Feed-Forward 3DGS. [📄 Paper | 💻 Code |🌐 Project Page ]

FastVGGT: Training-Free Acceleration of Visual Geometry Transformer. [📄 Paper | 💻 Code |🌐 Project Page ]

Quantized Visual Geometry Grounded Transformer. [📄 Paper ]

Faster VGGT with Block-Sparse Global Attention. [📄 Paper ]

Evict3R: Training-Free Token Eviction for Memory-Bounded Streaming Visual Geometry Transformers. [📄 Paper ]

LiteVGGT: Boosting Vanilla VGGT via Geometry-aware Cached Token Merging. [📄 Paper ]

Speed3R: Sparse Feed-forward 3D Reconstruction Models. [📄 Paper ]

SR3R: Rethinking Super-Resolution 3D Reconstruction With Feed-Forward Gaussian Splatting. [📄 Paper ]

Representation Compaction

Data & Visual Augmentation

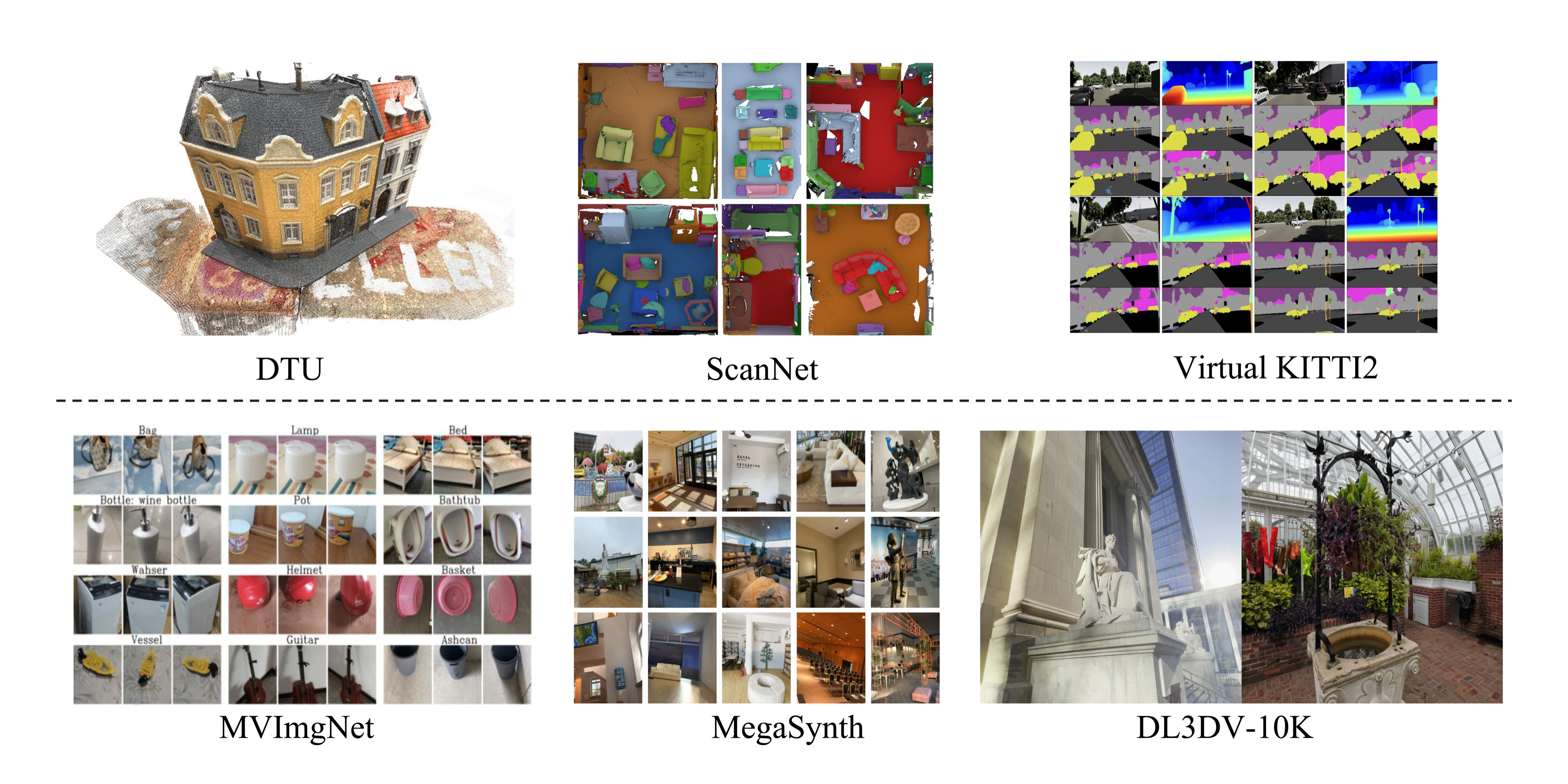

MegaSynth: Scaling Up 3D Scene Reconstruction with Synthesized Data. [📄 Paper | 💻 Code |🌐 Project Page ]

Puzzles: Unbounded Video-Depth Augmentation for Scalable End-to-End 3D Reconstruction. [📄 Paper | 💻 Code |🌐 Project Page ]

Aug3D: Augmenting Large Scale Outdoor Datasets for Generalizable Novel View Synthesis. [📄 Paper ]

MVBoost: Boost 3D Reconstruction with Multi-View Refinement.

MVSplat360: Feed-Forward 360 Scene Synthesis from Sparse Views. [📄 Paper | 💻 Code |🌐 Project Page ]

latentSplat: Autoencoding Variational Gaussians for Fast Generalizable 3D Reconstruction. [📄 Paper | 💻 Code |🌐 Project Page ]

ProSplat: Improved Feed-Forward 3D Gaussian Splatting for Wide-Baseline Sparse Views. [📄 Paper ]

Difix3D+: Improving 3D Reconstructions with Single-Step Diffusion Models.

Reconstruct, Inpaint, Finetune: Dynamic Novel-view Synthesis from Monocular Videos. [📄 Paper ]

StreamSplat: Towards Online Dynamic 3D Reconstruction from Uncalibrated Video Streams. [📄 Paper | 💻 Code ]

Continuous 3D Perception Model with Persistent State. [📄 Paper | 💻 Code |🌐 Project Page ]

DGS-LRM: Real-Time Deformable 3D Gaussian Reconstruction From Monocular Videos. [📄 Paper |🌐 Project Page ]

Stream3R: Scalable Sequential 3D Reconstruction with Causal Transformer. [📄 Paper ]

LongStream: Long-Sequence Streaming Autoregressive Visual Geometry. [📄 Paper ]

L4GM: Large 4D Gaussian Reconstruction Model.

4D-LRM: Large Space-Time Reconstruction Model From and To Any View at Any Time. [📄 Paper ]

MonST3R: A Simple Approach for Estimating Geometry in the Presence of Motion. [📄 Paper | 💻 Code |🌐 Project Page ]

Easi3R: Estimating Disentangled Motion from DUSt3R Without Training. [📄 Paper | 💻 Code |🌐 Project Page ]

4DGT: Learning a 4D Gaussian Transformer Using Real-World Monocular Videos. [📄 Paper | 💻 Code |🌐 Project Page ]

MoVieS: Motion-Aware 4D Dynamic View Synthesis in One Second. [📄 Paper | 💻 Code |🌐 Project Page ]

4Real-Video-V2: Fused View-Time Attention and Feedforward Reconstruction for 4D Scene Generation. [📄 Paper |🌐 Project Page ]

MonoFusion: Sparse-View 4D Reconstruction via Monocular Fusion. [📄 Paper | 💻 Code |🌐 Project Page ]

Self-Supervised Monocular 4D Scene Reconstruction for Egocentric Videos. [📄 Paper ]

Feed-forward Bullet-Time Reconstruction of Dynamic Scenes from Monocular Videos. [📄 Paper ]

PIXIE: Physics from Pixels for Interactive Feed-Forward Scene Modeling. [📄 Paper ]

PhysGM: Physical Gaussian Modeling for Interactive 3D Scene Editing. [📄 Paper ]

DAS3R: Dynamics-Aware Gaussian Splatting for Static Scene Reconstruction. [📄 Paper | 💻 Code |🌐 Project Page ]

St4RTrack: Simultaneous 4D Reconstruction and Tracking in the World.

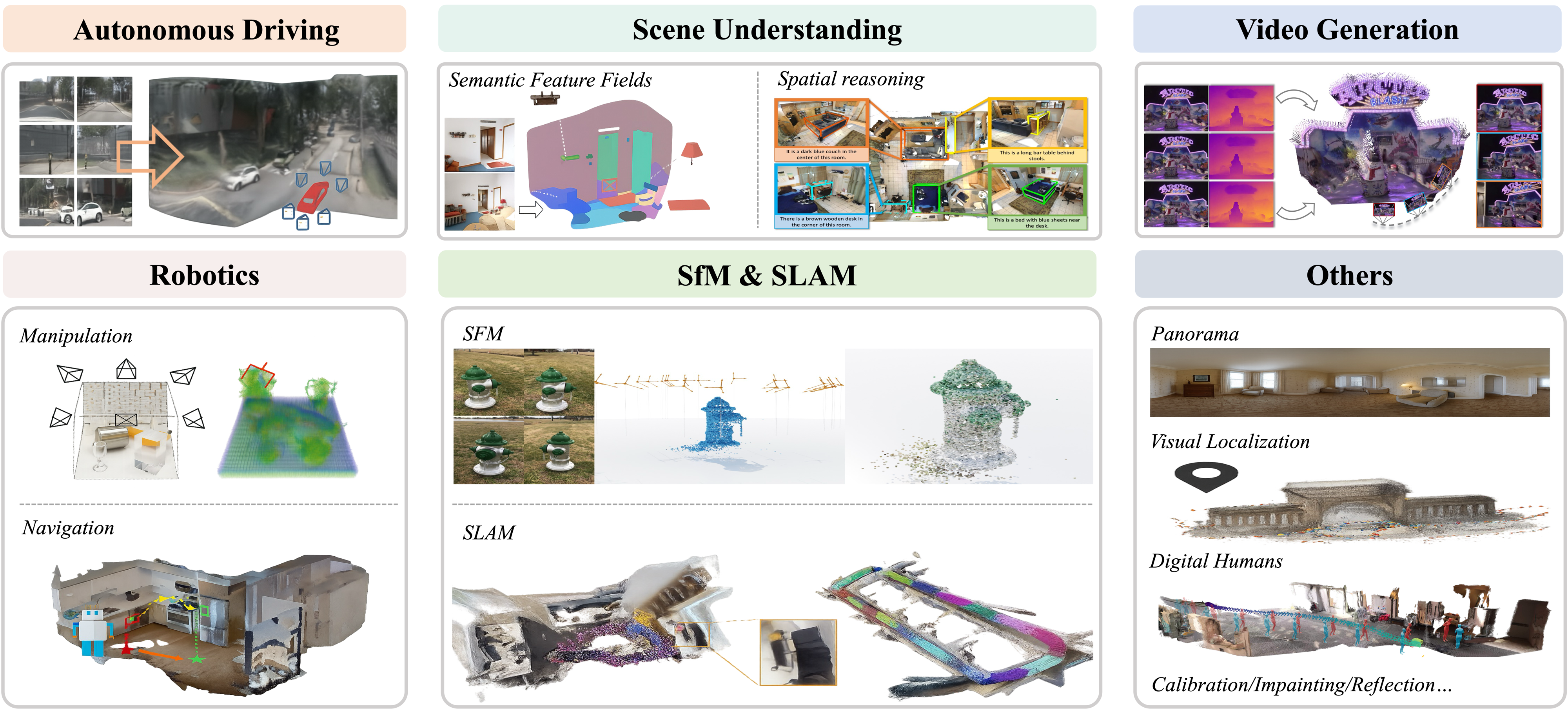

GraspNeRF: Multiview-based 6-DoF Grasp Detection for Transparent and Specular Objects Using Generalizable NeRF. [📄 Paper | 💻 Code |🌐 Project Page ]

ManiGaussian: Dynamic Gaussian Splatting for Multi-task Robotic Manipulation. [📄 Paper | 💻 Code |🌐 Project Page ]

ManiGaussian++: General Robotic Bimanual Manipulation with Hierarchical Gaussian World Model. [📄 Paper | 💻 Code ]

Query-based Semantic Gaussian Field for Scene Representation in Reinforcement Learning. [📄 Paper ]

GAF: Gaussian Action Field as a Dynamic World Model for Robotic Manipulation. [📄 Paper |🌐 Project Page ]

GaussianGrasper: 3D Language Gaussian Splatting for Open-Vocabulary Robotic Grasping. [📄 Paper | 💻 Code ]

EmbodiedSplat: Personalized Real-to-Sim-to-Real Navigation with Gaussian Splats from a Mobile Device. [📄 Paper |🌐 Project Page ]

IGL-Nav: Incremental 3D Gaussian Localization for Image-goal Navigation. [📄 Paper | 💻 Code |🌐 Project Page ]

VR-Robo: A Real-to-Sim-to-Real Framework for Visual Robot Navigation and Locomotion. [📄 Paper | 💻 Code |🌐 Project Page ]

GS-LTS: 3D Gaussian Splatting-Based Adaptive Modeling for Long-Term Service Robots. [📄 Paper |🌐 Project Page ]

UnitedVLN: Generalizable Gaussian Splatting for Continuous Vision-Language Navigation. [📄 Paper ]

Visual Geometry Grounded Deep Structure From Motion. [📄 Paper | 💻 Code |🌐 Project Page ]

Light3R-SfM: Towards Feed-forward Structure-from-Motion. [📄 Paper |🌐 Project Page ]

MASt3R-SfM: A Fully-Integrated Solution for Unconstrained Structure-from-Motion. [📄 Paper | 💻 Code ]

Regist3R: Incremental Registration with Stereo Foundation Model. [📄 Paper | 💻 Code ]

VGGT-Long: Chunk it, Loop it, Align it -- Pushing VGGT's Limits on Kilometer-scale Long RGB Sequences. [📄 Paper | 💻 Code ]

Fast3R: Towards 3D Reconstruction of 1000+ Images in One Forward Pass. [📄 Paper | 💻 Code |🌐 Project Page ]

FLARE: Feed-forward Geometry, Appearance and Camera Estimation from Uncalibrated Sparse Views. [📄 Paper | 💻 Code |🌐 Project Page ]

MASt3R-SLAM: Real-Time Dense SLAM with 3D Reconstruction Priors. [📄 Paper | 💻 Code |🌐 Project Page ]

SLAM3R. [📄 Paper | 💻 Code ]

VGGT-SLAM. [📄 Paper | 💻 Code ]

ARTDECO: Towards Efficient and High-Fidelity On-the-Fly 3D Reconstruction with Structured Scene Representation. [📄 Paper ]

EC3R-SLAM: Efficient and Consistent Monocular Dense SLAM with Feed-Forward 3D Reconstruction. [📄 Paper ]

MASt3R-Fusion: Integrating Feed-Forward Visual Model with IMU, GNSS for High-Functionality SLAM. [📄 Paper ]

ViSTA-SLAM: Visual SLAM with Symmetric Two-view Association. [📄 Paper | 💻 Code |🌐 Project Page ]

SLGaussian: Fast Language Gaussian Splatting in Sparse Views. [📄 Paper ]

GSemSplat: Generalizable Semantic 3D Gaussian Splatting from Uncalibrated Image Pairs. [📄 Paper ]

SegMASt3R: Geometry Grounded Segment Matching. [📄 Paper |🌐 Project Page ]

PartField: Learning 3D Feature Fields for Part Segmentation and Beyond. [📄 Paper | 💻 Code |🌐 Project Page ]

Semantic Gaussians: Open-Vocabulary Scene Understanding with 3D Gaussian Splatting. [📄 Paper | 💻 Code |🌐 Project Page ]

SemanticSplat: Feed-Forward 3D Scene Understanding with Language-Aware Gaussian Fields. [📄 Paper | 💻 Code |🌐 Project Page ]

UniForward: Unified 3D Scene and Semantic Field Reconstruction via Feed-Forward Gaussian Splatting from Only Sparse-View Images. [📄 Paper ]

Large Spatial Model: End-to-end Unposed Images to Semantic 3D. [📄 Paper | 💻 Code |🌐 Project Page ]

AlignGS: Aligning Geometry and Semantics for Robust Indoor Reconstruction from Sparse Views. [📄 Paper |🌐 Project Page ]

Reconstruction-enhanced Video Generation

Video Generation-based Scene Reconstruction

Geometry Forcing: Marrying Video Diffusion and 3D Representation for Consistent World Modeling. [📄 Paper | 💻 Code |🌐 Project Page ]

Video World Models with Long-term Spatial Memory. [📄 Paper |🌐 Project Page ]

SteerX: Creating Any Camera-Free 3D and 4D Scenes with Geometric Steering. [📄 Paper | 💻 Code |🌐 Project Page ]

4DNeX: Feed-Forward 4D Generative Modeling Made Easy. [📄 Paper | 💻 Code |🌐 Project Page ]

Lyra: Generative 3D Scene Reconstruction via Video Diffusion Model Self-Distillation. [📄 Paper ]

ShapeGen4D: Towards High Quality 4D Shape Generation from Videos. [📄 Paper |🌐 Project Page ]

EvoWorld: Evolving Panoramic World Generation with Explicit 3D Memory. [📄 Paper ]

WorldForge: Unlocking Emergent 3D/4D Generation in Video Diffusion Model via Training-Free Guidance. [📄 Paper ]

FantasyWorld: Geometry-Consistent World Modeling via Unified Video and 3D Prediction. [📄 Paper ]

Reloc3r: Large-Scale Training of Relative Camera Pose Regression for Generalizable, Fast, and Accurate Visual Localization. [📄 Paper ]

A Scene is Worth a Thousand Features: Feed-Forward Camera Localization from a Collection of Image Features. [📄 Paper ]

Multi-View 3D Point Tracking. [📄 Paper |🌐 Project Page ]

SAIL-Recon: Large SfM by Augmenting Scene Regression with Localization. [📄 Paper | 💻 Code |🌐 Project Page ]

Calibration, Inpainting and Reflection

LoRA3D: Low-Rank Self-Calibration of 3D Geometric Foundation Models. [📄 Paper |🌐 Project Page ]

BevSplat: Resolving Height Ambiguity via Feature-Based Gaussian Primitives for Weakly-Supervised Cross-View Localization.

InstaInpaint: Instant 3D-Scene Inpainting with Masked Large Reconstruction Model. [📄 Paper |🌐 Project Page ]

Reflect3r: Single-View 3D Stereo Reconstruction Aided by Mirror Reflections. [📄 Paper |🌐 Project Page ]